AI and ML developers bump into the same rough edges of system orchestration that nearly every developer encounters. Whether it's managing complex data pipelines, coordinating jobs across GPU resources, handling failures, or deploying a model, the challenges are real.

What might be a little different for the AI/ML developer is the pressure to deliver. The space is active, and it seems a new AI company is born every week. Velocity is critical as these companies seek to out-innovate each other in a land grab for attention. In this environment, speeding up your team’s ability to iterate and learn is a meaningful advantage.

Temporal and AI in 2026: Orchestrating the modern ML stack#

Temporal provides a code-first approach to tackle these orchestration challenges head-on. It allows you to not only build more reliable services, but also to build them faster, which is probably why we see Temporal being used by so many AI companies today. As of this writing, we have over 90 companies with a .ai domain using Temporal Cloud, and we know that there are many, many more running Temporal on their own.

Broadly speaking, we see two patterns across these teams for their workloads being built with Temporal:

- Orchestration of End-to-End AI/ML Processes

- Management of AI/ML Data Flows

Orchestration of End-to-End AI/ML Processes#

Many of our AI and ML customers use Temporal in place of coding state machines and writing complex rollbacks or retries when things fail. We’ve seen many examples of this, including this Document Processing Pipeline example and this Context Injection example. Descript is another AI platform using Temporal in this way.

Descript uses AI to enhance video, transcribe, and generate humanlike voice#

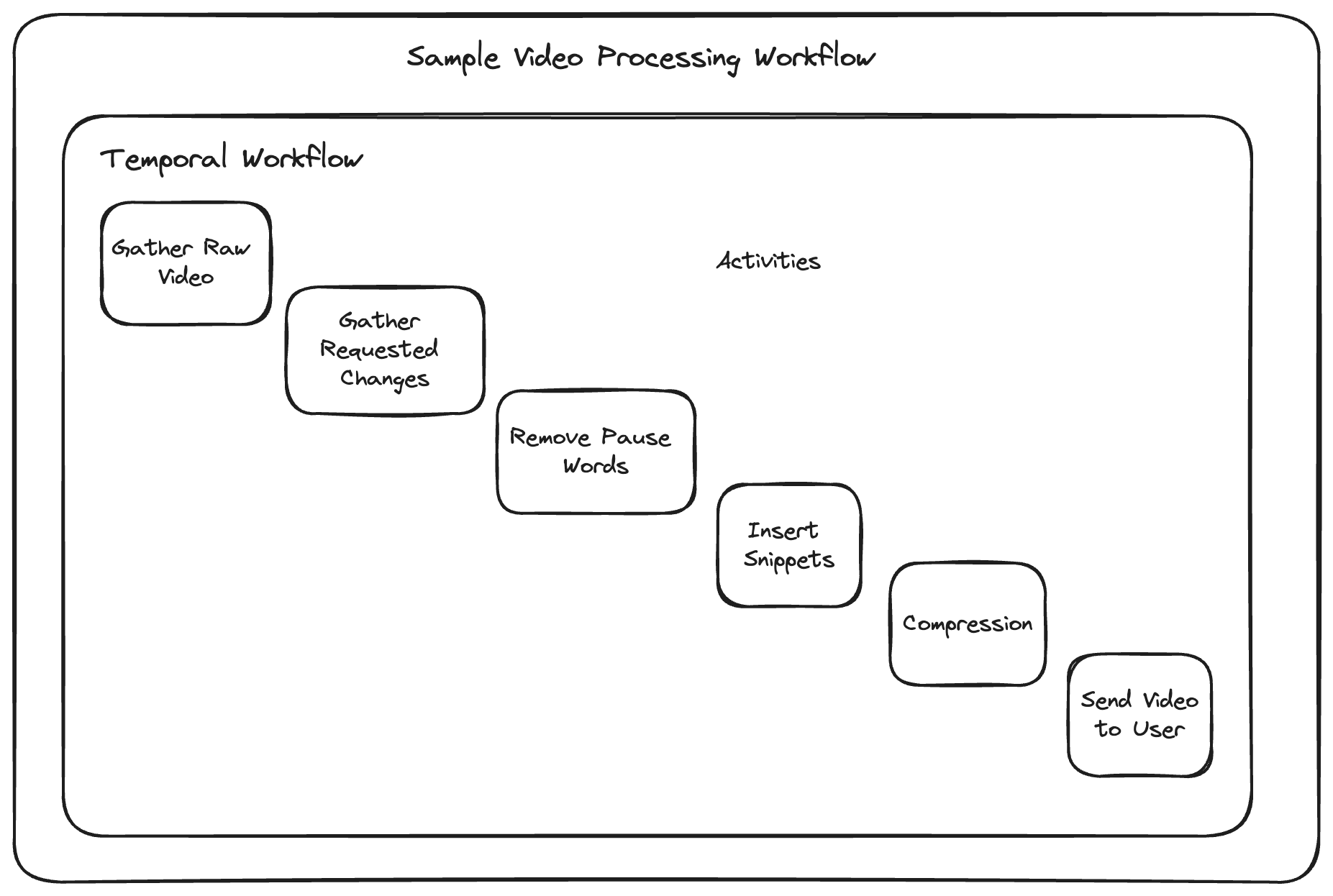

Descript uses AI to enhance video, generate transcripts, and build AI-generated voice libraries. With Descript, you can remove "ums" and "ahs" from a video and add sentences in your own AI-generated voice. Processing the video, training the voice, inserting the sentence, and processing the final video are all orchestrated by a Temporal Workflow. When the Descript team needs to iterate on this process, they simply update the Workflow code.

Temporal keeps this process moving to completion by automatically retrying failed steps until they succeed and by supporting long-running tasks.

Management of AI/ML and data engineering flows#

Another common pattern we see with AI use cases is the management of complex data pipelines. Ultimately, the fuel for AI/ML workflows and LLMs is data. While RAG and other approaches may help fine-tune what we ask, systematic training of models and reliable collection of data are critical. Temporal is a great fit for automating data engineering processes, and we often see it used for data flows. One such Temporal customer is Neosync.

Neosync uses AI to automate databases#

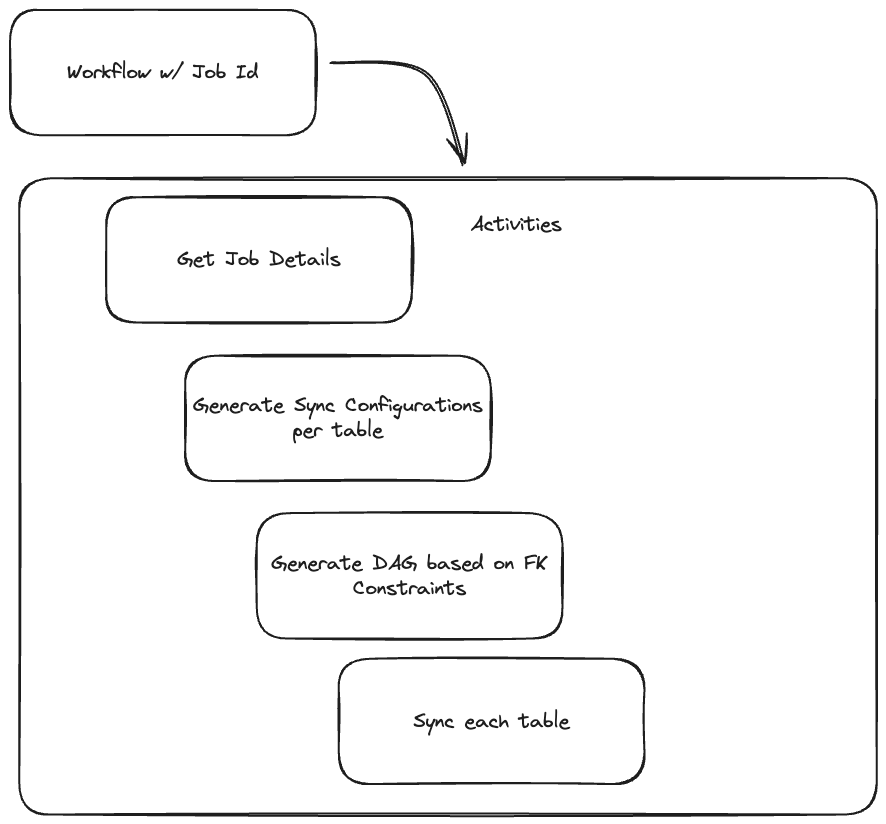

Neosync uses Temporal to automate, anonymize and generate data as well as synchronize databases. Temporal makes data engineering tasks easier for Neosync by abstracting away the complexities of process management. Developers can write an entire data pipeline as a Temporal Workflow, making it much less complex to run and understand.

“Temporal makes a lot of this easier. (With Temporal), you’re not building it all from scratch. Temporal is the event system that abstracts away the complexities of process management.” - Nick Zelei from Neosync

Temporal abstracts away the status tracking, retries, and complexity of running a fleet of data pipelines.

Temporal makes building AI and ML processes easier, faster, and more reliable#

Temporal's Workflow and Activity model is designed specifically for developers dealing with complex orchestration tasks. Here’s what stands out:

How Workflows and Activities work to execute steps and manage risk#

Workflows in Temporal define the sequence of operations or steps in your process and run exactly once. They’re dynamic and can execute steps based on data and results of previous steps, which is critical in the AI/ML domain as the next steps often depend on the data.

Activities represent the individual tasks within your Workflow. They're the workhorses, designed to handle the unreliable bits of your process, like calls to external services or computations. Activities help you manage risk; Temporal automatically retries failed Activities, and you can configure these retries, along with timeouts, to fit your needs.

Example using Python: Training and testing models#

Here is a sample of a Python Workflow that trains, tests, and deploys a model.

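```python
from datetime import timedelta

from temporalio import workflow

# ModelInfo and the train, test, and deploy Activities are assumed to be
# defined elsewhere in the application.


@workflow.defn
class TrainTestDeploy:
    @workflow.run
    async def run(self, input: ModelInfo) -> str:
        # Each step is an Activity with a timeout sized to the work it does.
        await workflow.execute_activity(
            train, input, start_to_close_timeout=timedelta(hours=5)
        )
        await workflow.execute_activity(
            test, input, start_to_close_timeout=timedelta(minutes=50)
        )
        await workflow.execute_activity(
            deploy, input, start_to_close_timeout=timedelta(seconds=15)
        )
        return "successfully trained, tested, and deployed!"
```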

You define what needs to happen and Temporal ensures it gets done, retrying tasks as needed and providing a clear record of the Workflow’s execution.

Resilience to failures and flexibility across programming languages#

With Temporal, you can focus more on the logic of your application and less on the boilerplate around job management, retries, and failure handling. Temporal handles the heavy lifting, making your processes more reliable and easier to manage.

- Visibility: Temporal’s UI gives a clear view of your Workflow's progress and status.

- Resilience: Failures in one step? Temporal's retry logic and state management let you pick up where things left off without starting over.

- Flexibility: Support for multiple programming languages means you can work in the environments you’re most comfortable with.

Scale your ML and agentic AI processes#

Temporal isn't just for procedural Workflows. It can handle long-running jobs and complex nested Workflows, and it can interact with other systems through Signals for multistep processes. You can also build entity lifecycle Workflows that represent a digital twin of an ML model, a data pipeline, or a dataset. This flexibility is invaluable whether you're automating data pipelines, coordinating microservices, or anything in between.

Cut GPU costs by running intensive workloads only when you need them#

With Temporal, you can define what work should be done where. If some steps of a process should run on ordinary infrastructure, such as a Kubernetes pod on typical hardware, you can do that. You can also use Task Queues to restrict certain Activities to other infrastructure, such as a high-powered GPU server. This lets you orchestrate complex tasks while using expensive resources only when necessary. We see teams use this ability to fire up a GPU node, run models, and then tear down the node, which can be a huge cost savings.

Run multiple AI pipelines together without rebuilding from scratch#

You can orchestrate Workflows with other Workflows to manage your whole fleet of processes. For example, the model training Workflow you saw earlier could be part of a bigger Workflow that orchestrates multiple similar processes, or that runs the same process multiple times with different inputs.

If you need to interact with other systems, such as gathering user input and taking the next step in a process, you can use Signals. This is especially helpful in orchestrating a multistep interactive process, like working with a chatbot powered by AI.

tl;dr: Temporal helps AI/ML teams deliver results#

Temporal provides a robust, developer-friendly platform for orchestrating complex workflows. Its focus on code-first design, reliability, and flexibility makes it a valuable tool in the developer’s toolkit, especially for teams in the AI, ML, and data engineering fields.

Give Temporal a try for your processes and see how it can streamline your workflows, reduce operational complexity, and let you focus on building great software.